Anatomy of an A/B Test

Intuitions on Null Hypothesis, Statistical Significance, p-Values

The Snippet is a Weekly Product Management Newsletter for aspiring Product Leaders.

When building products everyone has an opinion.

“Change this button to green and people will buy more”

“Let’s change this banner image to something else and we will see more signups”.

And while opinions abound, A/B testing is a quick and easy way to test them and see if they really hold water. Product Managers love it.

Here’s what typically goes on:

1. You have a hypothesis, say about your landing page conversions: “Changing this landing page button from Grey to Red will make people click it more often.”

2. You create 2 versions of your landing page: Version A is the original page, Version B is the variation.

3. You use a tool to run the A/B test and wait for results.

The tool then starts serving the control and treated landing pages randomly to your selected target audience and after a while spits out results.

And the results say the Red button generated 5% more clicks than the other button, and the results are statistically significant.

Statistical Significance!

Most Product Managers know that when looking at the results of an A/B test, Statistical Significance is king. This thing—whatever it means— decides whether or not the experiment’s results are conclusive. If the results are statistically significant, it’s usually inferred that they are valid. Business leaders love to ask if the results are statistically significant.

In our example above, our A/B test tool does the heavy lifting and simply declares that the results are significant —so we declare victory and change the color of the button to Red. Now we better see a lift in click-throughs!

But let’s be honest. Do we really know what just happened?

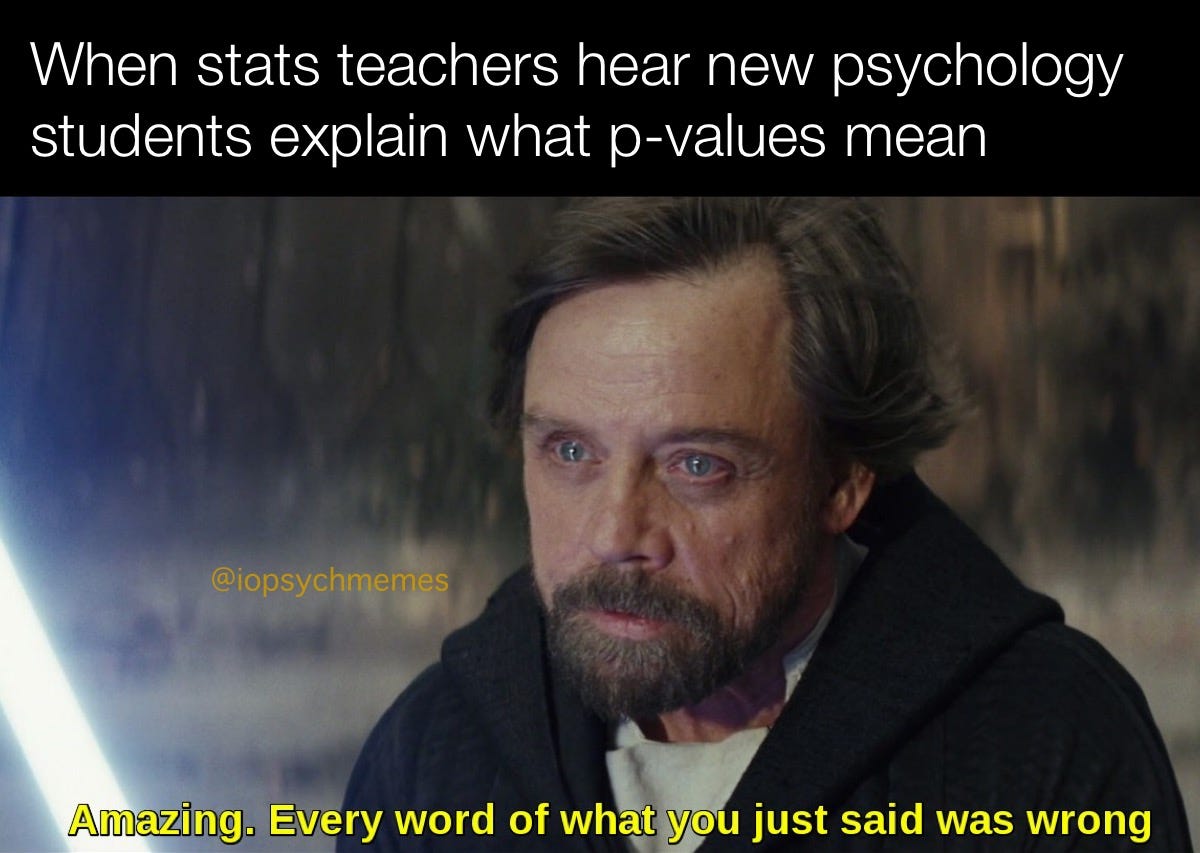

A/B testing is chronically misunderstood

A/B tests are a widely known method of experimentation employed by Product builders and Managers everywhere —and while many use it to run experiments, only a handful understand what’s really going on.

This is in part because many PMs do not come from a traditional statistics background, and even the ones who do often lose touch with the intuition behind these tests. And that’s a problem.

Many A/B tests are unfortunately set up wrong, leading unsuspecting PMs to make product decisions based on flawed premises. You are making these big product decisions but relying on tools to do the right thing.

Blindly accepting results from an A/B testing tool, without understanding what the numbers mean and how to set up the test in the first place can be detrimental to decision making.

To set up tests and interpret results properly, one needs to understand the intuition behind an A/B test and what constructs such as hypothesis, significance, and p-values really mean.

Don’t get me wrong —the Tools are great, but you know —Garbage in, Garbage out.

Understanding the intuition behind an A/B test

For the rest of this write-up, I will try to demystify what the A/B test is doing, and the intuition behind some core concepts of an A/B test. In my experience as a PM, understanding these concepts is very important to set up, run and properly infer results from A/B experiments.

My reason for writing this? I struggled with it for a long time myself. I understood the math, but intuition was missing. For being such a powerful way to experiment, it’s a shame that A/B testing is so poorly understood.

When A/B testing, think like a scientist

Guess what Scientists and Researchers do when they have a hypothesis and want to confirm if it explains a phenomenon that they observe?

They don’t prove the hypothesis that they came up with. Instead, they try to disprove the null hypothesis.

Null Hypothesis is the most powerful concept in A/B experimentation, and yet the least understood. That’s because to most people it’s not very intuitive.

What does disproving the null hypothesis even mean?

The Null Hypothesis

What if I told you— “I think I have superpowers and I can make the sun rise every morning.”

You’ll probably say—“Nah, I don’t believe it”

Me— “Don’t believe it? Just wake up before sunrise tomorrow and see for yourself!”

You wake up early the next morning and sure enough, there’s the sun rising! Would you say it’s proof that I have superpowers?

Probably not. You’ll likely say— “ That doesn’t prove you have superpowers, the sun comes up the same every day!”.

What you just said is the NULL HYPOTHESIS. And my claim that I have superpowers is called the ALTERNATE HYPOTHESIS.

But the null hypothesis is way more important. It’s the counter to my hypothesis that I have superpowers.

So how must I prove that I have superpowers?

The only way to do that is to show that the Sun doesn’t rise when I don’t want it to. If that happened, you’d probably accept that I have superpowers, right?

This is also how scientists work during drug trials.

Let’s say scientists have come up with a drug to fight a disease where the chance of death is 10%.

Their hypothesis is that the drug is effective in reducing patient deaths. And so, they are seeking to answer the following question

“Does this drug improve patient outcomes for this disease? Or does it do nothing?”

The “do nothing” scenario is the NULL HYPOTHESIS.

And to definitively prove that the drug is indeed effective, scientists must disprove that it does nothing.

In other words, they must show that the null hypothesis that the drug doesn’t do anything is wrong. That’s when the rubber will really meet the road.

In Product Management land when a PM conducts an experiment to test their hypothesis

“Will a Green button drive more clicks than a red one?”

The PM must start with the null hypothesis which is

“ The Green button does nothing, it doesn’t drive any more clicks than the red one”.

And then see if the test results definitely show that the null hypothesis is wrong.

But, how do you disprove the null hypothesis?

Learning from Drug Trials

Back to our drug example.

To test a drug’s efficacy, of course, scientists set up a randomized A/B trial.

Let’s say they take 100 patients and randomly assign each person to either Group A or Group B.

(Why random? Because randomizing is an easy way to keep biases such as age, gender, or ethnicity from piling up in one group. This way the groups are comparable, so a difference in outcomes is more likely to come from the drug than from who happened to land in which group.)

Anyway, the two groups A & B are re-named —one as the Control Group and the other as the Treatment group. Since both were created at random, it doesn’t really matter which is which.

And finally, the Control group is administered a placebo, and the Treatment group is administered the drug. Now they must wait for results.
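The random split described above can be sketched in a few lines of Python. This is only an illustration: the patient IDs, the `assign_groups` helper, and the seed are made up for the example, and shuffling then cutting the list in half is just one simple way to get two equal-sized random groups.

```python
import random

def assign_groups(patient_ids, seed=None):
    """Shuffle the patients, then cut the list in half (hypothetical helper)."""
    rng = random.Random(seed)      # seeded so the split can be reproduced
    shuffled = list(patient_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

patients = range(1, 101)           # 100 made-up patient IDs
control, treatment = assign_groups(patients, seed=42)
print(len(control), len(treatment))
```

Because the shuffle decides who lands where, neither the scientists nor the patients get to influence which group receives the drug.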

Disproving the Null Hypothesis

Say after a few months, the results are in — and data shows that fewer people in the Treatment group died.

Should the scientists infer that the drug works? It certainly seems so.

If you are a scientist, that information is indeed promising, but not enough to declare victory yet. After all, what’s the guarantee that the fewer reported deaths in the treatment group are because of the drug and not because of pure chance?

That guarantee must be derived from the numbers. How many fewer deaths did the treatment group see than the placebo group?

The bigger the gap, the lower the probability of seeing it by pure chance if the drug does not work (the Null Hypothesis).
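One way to get a feel for this is to simulate a world where the drug does nothing. The sketch below assumes two groups of 50 patients, each patient facing the same 10% chance of death, and counts how often the treatment group ends up with at least a given number of fewer deaths purely by luck. The function name, the number of simulated trials, and the seed are arbitrary choices for illustration.

```python
import random

def chance_of_gap(min_gap, group_size=50, death_rate=0.10, trials=10_000, seed=0):
    """Share of simulated trials in which the treatment group has at least
    `min_gap` fewer deaths than control, even though the drug does nothing."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # Under the null hypothesis both groups face the same death rate.
        control_deaths = sum(rng.random() < death_rate for _ in range(group_size))
        treatment_deaths = sum(rng.random() < death_rate for _ in range(group_size))
        if control_deaths - treatment_deaths >= min_gap:
            hits += 1
    return hits / trials

results = {gap: chance_of_gap(gap) for gap in (1, 3, 5)}
for gap, share in results.items():
    print(f"treatment has at least {gap} fewer deaths by luck: {share:.3f}")
```

Small gaps show up by luck quite often, while large gaps become rare, which is why the size of the difference matters so much.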

Let’s dig a little deeper into this probability construct. We are getting into treacherous p-value territory.

p-values and Statistical Significance

p-values are perhaps the most well-known, yet the most misunderstood concept in Statistics. Of course, we know that “for statistical significance, p values should be small”, but do we know why they must be small?

Despite p-values being such an important concept in hypothesis testing, most of us don’t have the intuition for what they actually mean.

To understand P-Values — Let’s again go back to our drug example. Here’s a quick recap of what has happened so far:

The probability that a patient dies of disease is 10% (or their chance of survival is 90%)

We have 2 groups, with 50 randomly assigned people in each group. One group was given a placebo and the other group was administered the actual drug.

Our Null hypothesis is — the drug does nothing or doesn’t improve patient outcomes.

Based on the statements above

If the drug doesn’t help at all we should see a roughly equal number of deaths in both groups. But what if we observe that no one out of the 50 patients in the Treatment group died?

If the drug doesn’t work (null hypothesis), what is the probability that we see such fantastic results? Let’s calculate it.

We know that the survival chance of each patient is 90%. So what’s the probability that all 50 patients in the treatment group will survive?

According to probability theory, it is 90% x 90% x ... (50 times) = ~0.005. The probability of getting results this good, if the drug wasn’t working, is very, very low! And this probability is called the p-value.
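The multiplication is easy to check yourself. A minimal sketch, assuming each of the 50 patients survives independently with a 90% chance (the variable names are just for illustration):

```python
survival_rate = 0.90   # chance that one patient survives without any help
group_size = 50        # patients in the treatment group

# Multiplying 0.90 by itself 50 times is the same as raising it to the 50th power.
p_all_survive = survival_rate ** group_size
print(f"{p_all_survive:.4f}")  # 0.0052
```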

The p-value is the probability of getting results at least as extreme as the ones observed, assuming the Null hypothesis is true.

Remember, P-values are always defined relative to the Null hypothesis.

Since pure chance is such an unlikely explanation for these fantastic results, the far more plausible explanation is that the drug actually works!

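The lower the p-value, the more confidently you can reject the null hypothesis. With a p-value of 0.005, results this good would show up by chance only about 1 time in 200 if the drug did nothing, which by the way is pretty damn good evidence! People often quote (1-0.005) 99.5% as the confidence level. Just remember that this number describes how surprising the data would be under the null hypothesis; it is not a 99.5% probability that the drug works.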

But if the p-value is HIGH, it means that the null hypothesis has not been reliably disproven. So you cannot conclude that the drug is working. It could very well be something else or just chance.

And that is the statistical significance of a p-value.

What p-Values are acceptable typically depends upon the individual situation. If you are working with human lives as in the case of drug trials, then you’d want the p-value to be as close to ZERO as possible.

On the other hand, if you are trying to find out whether a GREEN button drives more clicks than the red button, higher p-values (0.05 - 0.1) may be acceptable, but frankly not much higher than that. But again, it all depends upon the context of the problem.
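To make the button case concrete, here is a rough sketch of how a p-value could be computed for a click test. It uses a pooled two-proportion z-test with a normal approximation, which is one common textbook approach and not necessarily what your A/B tool does. The click counts, the `one_sided_p_value` helper, and the 0.05 threshold are all made up for illustration.

```python
from math import erfc, sqrt

def one_sided_p_value(clicks_a, visitors_a, clicks_b, visitors_b):
    """p-value for the null 'B is no better than A' (pooled two-proportion z-test)."""
    rate_a = clicks_a / visitors_a
    rate_b = clicks_b / visitors_b
    # Under the null both versions share one click rate, so pool the data.
    pooled = (clicks_a + clicks_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    # Upper tail of the standard normal distribution: P(Z >= z).
    return 0.5 * erfc(z / sqrt(2))

# Hypothetical results: red (A) got 200 clicks from 2000 visitors,
# green (B) got 240 clicks from 2000 visitors.
p_value = one_sided_p_value(200, 2000, 240, 2000)
threshold = 0.05  # the p-value level you decided on before running the test
print(f"p-value: {p_value:.4f}, reject the null: {p_value < threshold}")
```

With these made-up numbers the null hypothesis would be rejected at a 0.05 threshold but not at 0.005, which is exactly why the threshold should be picked before looking at the results.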

So Product Managers

Next time you are doing an A/B test on a Green button vs a Red button, think about the following:

What is your alternate hypothesis?

What’s the opposing Null Hypothesis?

What level of p-value are you comfortable with? In other words, are you OK with a p-value of 0.05, or must it be 0.005?

Keep using your favorite A/B experiment tools, but don’t let go of the intuition to apply the results.

Don’t prove your alternate Hypothesis, instead Disprove your Null Hypothesis!

There are some other nuances to A/B testing such as how many people you need to have in your experiment to reach significance, but let us talk about that in a future post. For now, I’ll leave you with this!

Thanks for reading,

-Abhi

A lot of the ideas I write here are from this awesome book on how to develop your intuition on mathematical concepts and apply it to decision making. It can be a little involved, but I recommend it if you are interested in that sort of thing.
